
Transformers, originally designed for natural language processing tasks, have shown significant potential in time series prediction. Their self-attention mechanism lets them relate any two time steps directly. This can help them capture long-term dependencies and patterns in sequential data more effectively than traditional models like ARIMA or LSTMs. With enough data, Transformers can deliver more accurate and robust forecasts, including on complex and irregular time series datasets.

Advantages of Using Transformers for Time Series Prediction

- Long-Term Dependency Capture: Self-attention mechanisms excel in understanding long-range relationships.

- Scalability: Processes all time steps in parallel during training, which suits large and complex datasets (although attention cost grows quadratically with sequence length).

- Versatility: Adapts to multivariate time series data across industries.

- Improved Forecast Accuracy: Can outperform traditional models on dynamic and non-linear datasets.

- Transfer Learning Potential: Pre-trained transformer models can be fine-tuned for time series tasks.

Transformers in Time Series Prediction: A Revolutionary Advancement

Time series prediction has advanced significantly in recent years, particularly with the introduction of transformer-based models. Accurate time series predictions can make a real difference in areas such as demand forecasting, financial analysis, and weather prediction.

Traditional forecasting methods often fall short when it comes to capturing the dynamic changes and complex patterns in data. This is where transformers, originally developed for natural language processing (NLP), come into play.

Can Transformers Improve Time Series Prediction?

The short answer is: often, yes, given enough data. In this article, we explore how transformers can enhance time series predictions, where they are applied across domains, and where they still fall short.

What Are Transformers?

Transformers are neural network architectures originally designed for NLP tasks such as text translation and sentiment analysis. Their architecture, however, also makes them well suited to processing time series data.

Here’s how transformers enhance time series prediction:

- Data Transformation: Transformers map each time step's observations into a learned vector representation (an embedding) that the rest of the model can analyze.

- Feature Extraction: They can automatically extract features from time series data, capturing complex patterns that traditional methods might miss.

- Improved Efficiency: Transformers process all time steps of a sequence in parallel, which can make training on large amounts of data faster than with step-by-step recurrent models.
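In practice, the "data transformation" step usually comes down to a learned linear projection. This projection maps each time step's vector of observations to a `d_model`-dimensional embedding. The NumPy sketch below uses random weights in place of trained ones. The function name `embed_series`, the three example features, and `d_model=16` are illustrative assumptions.

```python
import numpy as np

def embed_series(x, d_model, seed=0):
    """Project a (T, n_features) series to a (T, d_model) sequence of embeddings."""
    rng = np.random.default_rng(seed)
    n_features = x.shape[1]
    # Random weights stand in for the parameters a real model would learn
    W = rng.normal(scale=1.0 / np.sqrt(n_features), size=(n_features, d_model))
    b = np.zeros(d_model)
    return x @ W + b  # the same projection is applied to every time step

# Hypothetical example: 3 features (e.g. load, temperature, hour) over 24 steps
series = np.random.default_rng(1).normal(size=(24, 3))
tokens = embed_series(series, d_model=16)
print(tokens.shape)  # (24, 16)
```

Each row of `tokens` is one time step, now in the form the attention layers expect.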

Why Are Transformers Effective for Time Series Prediction?

Transformers have unique characteristics that make them effective for time series prediction:

- Attention Mechanism: Transformers use an attention mechanism that weights the most relevant parts of the input, improving the model's ability to capture dependencies over long sequences.

- Parallel Processing: Unlike recurrent neural networks (RNNs), transformers process data in parallel, which is faster and more efficient for large datasets.

- Scalability: Transformers are highly scalable and can handle extensive and complex time series datasets, making them suitable for industrial-scale applications.
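To make the attention and parallelism points concrete, here is a minimal NumPy sketch of scaled dot-product self-attention over a single sequence. The projection matrices are random placeholders, and the names `self_attention`, `Wq`, `Wk`, and `Wv` are illustrative. A real model learns these weights and usually uses several heads.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def self_attention(x, Wq, Wk, Wv):
    """Scaled dot-product self-attention over a (T, d_model) sequence."""
    Q, K, V = x @ Wq, x @ Wk, x @ Wv
    d_k = K.shape[-1]
    # (T, T) score matrix: every time step is compared with every other in one product
    scores = Q @ K.T / np.sqrt(d_k)
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ V, weights

rng = np.random.default_rng(0)
T, d_model, d_k = 10, 8, 4
x = rng.normal(size=(T, d_model))
Wq, Wk, Wv = (rng.normal(size=(d_model, d_k)) for _ in range(3))
out, weights = self_attention(x, Wq, Wk, Wv)
print(out.shape, weights.shape)  # (10, 4) (10, 10)
```

Because all pairwise scores come from a single matrix product, no step waits for the previous one, unlike in an RNN. The same `(T, T)` matrix is also why memory and compute grow quadratically with sequence length.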

Applications of Transformers in Time Series Prediction

Transformers are transforming time series prediction in various domains:

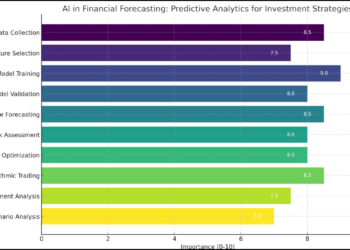

- Financial Forecasting: Predicting stock prices and market trends with higher accuracy.

- Weather Prediction: Improving long-term weather forecasts by analyzing complex climatic patterns.

- Healthcare: Monitoring and predicting patient health metrics over time for proactive care.

- Energy Management: Forecasting energy consumption to optimize resource allocation and reduce costs.

Conclusion

Transformers represent a significant leap forward in time series prediction, enabling more accurate and efficient forecasting in various fields. By leveraging their advanced capabilities, businesses and researchers can make informed decisions, optimize processes, and improve outcomes.

To learn more about the technical aspects of transformers, see "Attention Is All You Need" (Vaswani et al., 2017), the groundbreaking paper that introduced transformers to the world of AI.

The Transformer Architecture

The original Transformer is a sequence-to-sequence model with an encoder-decoder configuration. It takes a sequence of words in the source language as input and generates the translation in the target language.

Sequential machine learning models are limited in how far the influence of a data sample can reach during learning. In recurrent models, information from distant time steps must pass through every intermediate step and tends to fade. In some cases, autoregressive training can also lead a model to memorize past observations rather than generalize to new data. Transformers address these challenges with self-attention and positional encoding. Together, these let the model attend to all samples in the sequence jointly while still encoding their order. This keeps the sequential information intact for learning while eliminating the classical notion of recurrence. As a result, Transformers can exploit the parallelism provided by GPUs and TPUs.
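Self-attention on its own ignores order, so the original Transformer adds a fixed sinusoidal positional encoding to the input embeddings: PE(pos, 2i) = sin(pos / 10000^(2i/d_model)) and PE(pos, 2i+1) = cos(pos / 10000^(2i/d_model)). The NumPy sketch below computes that table. It assumes an even `d_model`, and `positional_encoding` is an illustrative name.

```python
import numpy as np

def positional_encoding(T, d_model):
    """Sinusoidal positional encoding as in "Attention Is All You Need" (d_model even)."""
    pos = np.arange(T)[:, None]                 # (T, 1) time-step index
    two_i = np.arange(0, d_model, 2)[None, :]   # (1, d_model/2) even dimension indices
    angles = pos / np.power(10000.0, two_i / d_model)
    pe = np.zeros((T, d_model))
    pe[:, 0::2] = np.sin(angles)  # even dimensions use sine
    pe[:, 1::2] = np.cos(angles)  # odd dimensions use cosine
    return pe

pe = positional_encoding(T=24, d_model=16)
print(pe.shape)  # (24, 16)
```

Adding `pe` to a `(24, 16)` embedding matrix gives identical observations at different time steps distinct representations. Many time series variants instead learn the position embedding or add calendar features. Which choice works best depends on the data.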

Encoder in PyTorch

```python
from torch import nn


class Encoder(nn.Module):
    """The base encoder interface for the encoder-decoder architecture."""

    def __init__(self):
        super().__init__()

    # Later there can be additional arguments (e.g., length excluding padding)
    def forward(self, X, *args):
        # Concrete encoders (such as a Transformer encoder) must override this
        raise NotImplementedError
```

How Are Transformers Used for Time Series Prediction?

Transformers, originally developed for natural language processing (NLP), have proven to be powerful tools for time series prediction. They offer significant advantages over traditional models like ARIMA and recurrent neural networks (RNNs). Let's look at how transformers use the encoder-decoder structure and the attention mechanism introduced in "Attention Is All You Need" to improve time series prediction.

Transformer Architectures for Time Series Prediction

1. Encoder-Decoder Architecture

The Encoder-Decoder architecture is a popular design for sequential data prediction:

- Encoder: Reads the input sequence and produces a contextual vector representation for each time step.

- Decoder: Uses these representations to predict the output sequence.

This architecture is ideal for processing time series data, enabling the transformation of sequential inputs into meaningful predictions.
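To train an encoder-decoder forecaster, a long series is typically cut into pairs. Each pair holds a past window, which becomes the encoder input, and the window that follows it, which becomes the decoder target. The sketch below does this for a univariate series. `make_windows`, `input_len`, and `horizon` are illustrative names, not part of any particular library.

```python
import numpy as np

def make_windows(series, input_len, horizon):
    """Split a 1-D series into (encoder input, decoder target) pairs."""
    X, Y = [], []
    for start in range(len(series) - input_len - horizon + 1):
        X.append(series[start:start + input_len])                       # past window
        Y.append(series[start + input_len:start + input_len + horizon])  # future window
    return np.array(X), np.array(Y)

series = np.arange(10, dtype=float)
X, Y = make_windows(series, input_len=4, horizon=2)
print(X.shape, Y.shape)  # (5, 4) (5, 2)
print(X[0], Y[0])        # [0. 1. 2. 3.] [4. 5.]
```

Each row of `X` would be embedded and passed to the encoder, and the matching row of `Y` is what the decoder learns to produce.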

2. Attention Is All You Need

The architecture introduced in "Attention Is All You Need" enhances the Encoder-Decoder model with an attention mechanism. This allows the decoder to focus on specific parts of the input sequence when predicting each output step, resulting in better accuracy and contextual understanding.

Building Transformers Using Encoders and Decoders

The Encoder Block

The encoder block is a crucial part of the transformer architecture:

- Includes multi-head self-attention layers and feed-forward layers.

- Utilizes residual connections to stabilize training and accelerate convergence.

- Applies layer normalization to stabilize and speed up training.

- Employs ReLU activation and linear layers for efficient feature transformation.

- Receives input embeddings (word embeddings in NLP, projected observation vectors for time series) combined with positional encoding vectors.
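The components listed above can be wired together in a short NumPy sketch of one encoder block. It chains multi-head self-attention, a residual connection, and layer normalization, followed by a ReLU feed-forward network with another residual connection and normalization. The sketch assumes the post-norm ordering of the original paper and random weights. It also omits the learnable scale and shift of layer normalization and omits dropout. All function and parameter names are illustrative.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def layer_norm(x, eps=1e-5):
    # Normalize each time step's feature vector to zero mean and unit variance
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

def multi_head_attention(x, Wq, Wk, Wv, Wo, n_heads):
    T, d_model = x.shape
    d_head = d_model // n_heads

    def split(m):
        # (T, d_model) -> (n_heads, T, d_head)
        return m.reshape(T, n_heads, d_head).transpose(1, 0, 2)

    Q, K, V = split(x @ Wq), split(x @ Wk), split(x @ Wv)
    scores = Q @ K.transpose(0, 2, 1) / np.sqrt(d_head)  # (n_heads, T, T)
    heads = softmax(scores) @ V                          # (n_heads, T, d_head)
    concat = heads.transpose(1, 0, 2).reshape(T, d_model)
    return concat @ Wo  # mix the heads back into d_model dimensions

def encoder_block(x, p, n_heads):
    # Sub-layer 1: multi-head self-attention + residual connection + layer norm
    x = layer_norm(x + multi_head_attention(x, p["Wq"], p["Wk"], p["Wv"], p["Wo"], n_heads))
    # Sub-layer 2: position-wise feed-forward (linear -> ReLU -> linear) + residual + norm
    ff = np.maximum(0, x @ p["W1"] + p["b1"]) @ p["W2"] + p["b2"]
    return layer_norm(x + ff)

rng = np.random.default_rng(0)
T, d_model, d_ff, n_heads = 12, 16, 32, 4
shapes = {"Wq": (d_model, d_model), "Wk": (d_model, d_model), "Wv": (d_model, d_model),
          "Wo": (d_model, d_model), "W1": (d_model, d_ff), "b1": (d_ff,),
          "W2": (d_ff, d_model), "b2": (d_model,)}
params = {name: rng.normal(scale=0.1, size=s) for name, s in shapes.items()}
x = rng.normal(size=(T, d_model))
y = encoder_block(x, params, n_heads)
print(y.shape)  # (12, 16)
```

The output has the same shape as the input, which is what allows encoder blocks to be stacked. Many modern implementations apply layer normalization before each sub-layer (pre-norm) instead, which tends to make deep stacks easier to train.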

The Decoder Block

The decoder block mirrors the encoder block with additional functionalities:

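- Accepts two inputs: one from the previous decoder layer (or the shifted target sequence) and one from the encoder.

- Includes masked multi-head self-attention and encoder-decoder attention layers.

- In encoder-decoder attention, takes its keys and values from the encoder outputs and its queries from the decoder, so each forecast step can draw on the most relevant parts of the input history.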
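Encoder-decoder (cross) attention uses the same scaled dot-product computation as self-attention. The only difference is where the inputs come from: the queries are built from the decoder, while the keys and values are built from the encoder output. The NumPy sketch below uses random weights and illustrative names. It shows each of 6 forecast positions attending over 24 encoded past steps.

```python
import numpy as np

def softmax(z, axis=-1):
    z = z - z.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(dec, enc, Wq, Wk, Wv):
    """Decoder positions attend over encoder outputs."""
    Q = dec @ Wq  # queries come from the decoder: (T_dec, d_k)
    K = enc @ Wk  # keys come from the encoder:    (T_enc, d_k)
    V = enc @ Wv  # values come from the encoder:  (T_enc, d_k)
    weights = softmax(Q @ K.T / np.sqrt(K.shape[-1]))  # (T_dec, T_enc)
    return weights @ V, weights

rng = np.random.default_rng(0)
T_enc, T_dec, d_model, d_k = 24, 6, 16, 8
enc_out = rng.normal(size=(T_enc, d_model))  # e.g. 24 encoded past steps
dec_in = rng.normal(size=(T_dec, d_model))   # e.g. 6 forecast positions
Wq, Wk, Wv = (rng.normal(size=(d_model, d_k)) for _ in range(3))
out, weights = cross_attention(dec_in, enc_out, Wq, Wk, Wv)
print(out.shape, weights.shape)  # (6, 8) (6, 24)
```

Row `t` of `weights` shows how strongly forecast step `t` draws on each past time step.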

Masking in Self-Attention

During training, a causal mask ensures that each decoder position cannot attend to future time steps. This prevents "data leakage" from the values the model is supposed to predict, and it keeps training consistent with how the model is used at prediction time.
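A common way to implement this mask is to set the attention scores for future positions to negative infinity before the softmax. Those positions then receive exactly zero weight. The NumPy sketch below assumes a single head, and `causal_mask` and `masked_softmax` are illustrative names. Framework implementations differ in how they pass the mask.

```python
import numpy as np

def causal_mask(T):
    # True above the diagonal: position t must not see positions t+1, t+2, ...
    return np.triu(np.ones((T, T), dtype=bool), k=1)

def masked_softmax(scores, mask):
    scores = np.where(mask, -np.inf, scores)  # blocked scores become -inf
    scores = scores - scores.max(axis=-1, keepdims=True)
    e = np.exp(scores)                         # exp(-inf) == 0
    return e / e.sum(axis=-1, keepdims=True)

T = 4
weights = masked_softmax(np.zeros((T, T)), causal_mask(T))
print(np.round(weights, 2))
```

With all-zero scores, row `t` spreads its weight evenly over positions `0..t` and gives nothing to later positions. For example, row 2 becomes one third on each of the first three positions and zero on the last.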

Benefits of Using Transformers for Time Series Prediction

1. Handling Long-Range Dependencies

Transformers excel at capturing long-range dependencies within sequential data, which is essential for accurate predictions.

2. Transfer Learning

Transformers can utilize transfer learning, allowing them to leverage pre-trained knowledge for improved performance in time series tasks.

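3. Better Generalization with Sufficient Data

When trained on large datasets with regularization such as dropout, transformers can learn patterns shared across many related series. A per-series statistical model like ARMA cannot do this. On small datasets, however, their large number of parameters makes them more prone to overfitting than compact statistical models.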

4. Improved Accuracy

By effectively learning complex patterns and relationships in data, transformers provide higher accuracy in predicting time series.

Transformers have significantly advanced time series prediction, offering a robust alternative to traditional models. Their ability to process long sequences and adapt through transfer learning makes them valuable in domains like financial forecasting, healthcare, and weather prediction. As the technology evolves, transformers are likely to play an even more significant role in handling sequential data and making informed predictions.

Use Cases of Transformers in Time Series Prediction

Transformers have proven to be powerful tools in time series prediction across various domains. Their ability to analyze patterns and trends in sequential data makes them highly effective for accurate forecasting. Here are some common use cases:

1. Field of Finance

Transformers are increasingly used in the financial sector for predictions such as:

- Stock prices

- Exchange rates

- Currency movements

- Other economic indicators

By analyzing historical data, transformers identify patterns and trends that help traders, marketers, and financial analysts make informed decisions.

2. Field of Weather Forecasting

Meteorologists increasingly use transformers to analyze historical weather patterns and predict upcoming conditions with greater accuracy. This is crucial given the rise in unpredictable and extreme climate events. Transformer-based models can help meteorologists to:

- Understand complex weather systems

- Warn about impending extreme weather events

- Provide actionable insights to mitigate risks


3. Field of Healthcare

Transformers contribute significantly to healthcare by analyzing patient data for potential health risks. Use cases include:

- Identifying patterns that indicate developing conditions

- Improving treatment plans with predictive insights

- Enhancing patient outcomes through early detection


Challenges with Time Series Prediction Using Transformer Models

While transformers offer numerous advantages, they also come with challenges:

1. Training Complexity

Transformer models are large and require significant computational resources. They demand:

- Time and effort to train

- Careful, consistent optimization (for example, learning-rate warm-up and hyperparameter tuning)
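One ingredient of stable transformer optimization is a learning-rate warm-up. The original paper increases the rate linearly for `warmup_steps` steps and then decays it in proportion to the inverse square root of the step number: lrate = d_model^-0.5 * min(step^-0.5, step * warmup_steps^-1.5). The sketch below implements that formula. The defaults `d_model=512` and `warmup_steps=4000` are the paper's base settings and are only a starting point for time series models.

```python
def transformer_lr(step, d_model=512, warmup_steps=4000):
    """Warm-up schedule from "Attention Is All You Need" (steps count from 1)."""
    step = max(step, 1)  # avoid division by zero at step 0
    # Linear increase for warmup_steps, then decay proportional to 1/sqrt(step)
    return d_model ** -0.5 * min(step ** -0.5, step * warmup_steps ** -1.5)

for s in (1, 1000, 4000, 16000):
    print(s, round(transformer_lr(s), 6))
```

The rate peaks at `warmup_steps` and falls to half that peak by four times as many steps.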


2. Dependency on Data

Transformers need vast amounts of data to establish correlations between input and output sequences. Insufficient data can lead to inaccurate predictions.

3. Prediction Speed

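Prediction can be slow for two reasons. Attention compares every input time step with every other, so its cost grows quadratically with the input length. In addition, autoregressive decoders generate forecasts one step at a time. This can limit real-time decision-making in some scenarios.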
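The one-step-at-a-time cost is easiest to see in an autoregressive rollout. Each predicted value is appended to the input window and fed back in, so a horizon of `H` steps needs `H` sequential forward passes. In the sketch below, `toy_model` is a hypothetical stand-in for a trained transformer (it simply predicts the window mean), and `rollout` is an illustrative name.

```python
import numpy as np

def rollout(history, model, horizon, input_len):
    """Autoregressive multi-step forecast: one model call per future step."""
    window = list(history[-input_len:])
    preds = []
    for _ in range(horizon):
        y_next = model(np.array(window))  # full forward pass over the current window
        preds.append(y_next)
        window = window[1:] + [y_next]    # slide: drop the oldest value, append the prediction
    return np.array(preds)

call_log = []

def toy_model(window):
    # Hypothetical stand-in for a trained transformer: predicts the window mean
    call_log.append(len(window))
    return float(window.mean())

preds = rollout(np.arange(10, dtype=float), toy_model, horizon=3, input_len=4)
print(len(call_log))  # 3
```

The calls cannot run in parallel because each one depends on the previous prediction. Some architectures avoid this by emitting the whole horizon in a single forward pass.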

4. Capturing Long-Term Dependencies

In principle, attention can relate any two time steps. In practice, however, its quadratic cost limits how long the input window can be, so very long histories are often truncated. Attention over individual time steps can also miss slow global trends and seasonality. This can limit their effectiveness in analyzing data over extended periods.

Conclusion

The use of transformers in time series prediction is expanding rapidly, providing accurate and actionable insights in finance, weather forecasting, healthcare, and more. Despite challenges such as training complexity and long-term dependency capture, transformers remain a transformative tool in sequential data analysis.

For more insights, see "Attention Is All You Need", the foundational paper on the transformer architecture.


All in all, Transformers have strong potential to improve the accuracy of time series forecasting. This can make them an effective tool for any organization that needs accurate and reliable predictions about its business operations. Research on transformer-based forecasting models is ongoing, and their full potential has yet to be realized.

Transformers leverage self-attention mechanisms to capture long-term dependencies and patterns, enabling more accurate forecasts in complex datasets.

In many cases, Transformers can outperform traditional models. They handle long-range dependencies better and process data in parallel, which can make them more efficient and accurate for certain time series tasks.

Industries like finance, healthcare, energy, and supply chain management can leverage Transformers for better demand forecasting, anomaly detection, and trend analysis.

While Transformers excel with large datasets, transfer learning can help them perform well on smaller datasets by fine-tuning pre-trained models.